Pulse Narrate

Use Pulse Narrate for deliverable voiceovers, response narration, settings, Gemini API keys, and troubleshooting.

What Pulse Narrate is

Pulse Narrate is the voiceover workflow for Pulse AI deliverables and agent responses. It generates spoken briefings for presentations, audits, and conversational responses when voice mode is enabled.

Pulse Narrate is not Pulse Record. Record is the native dictation app for capturing your speech. Narrate is the Gemini TTS workflow that turns Pulse AI-written summaries and deliverables into audio.

Section outline and media plan

| Reader question | Pulse Narrate section | Media to use |

|---|---|---|

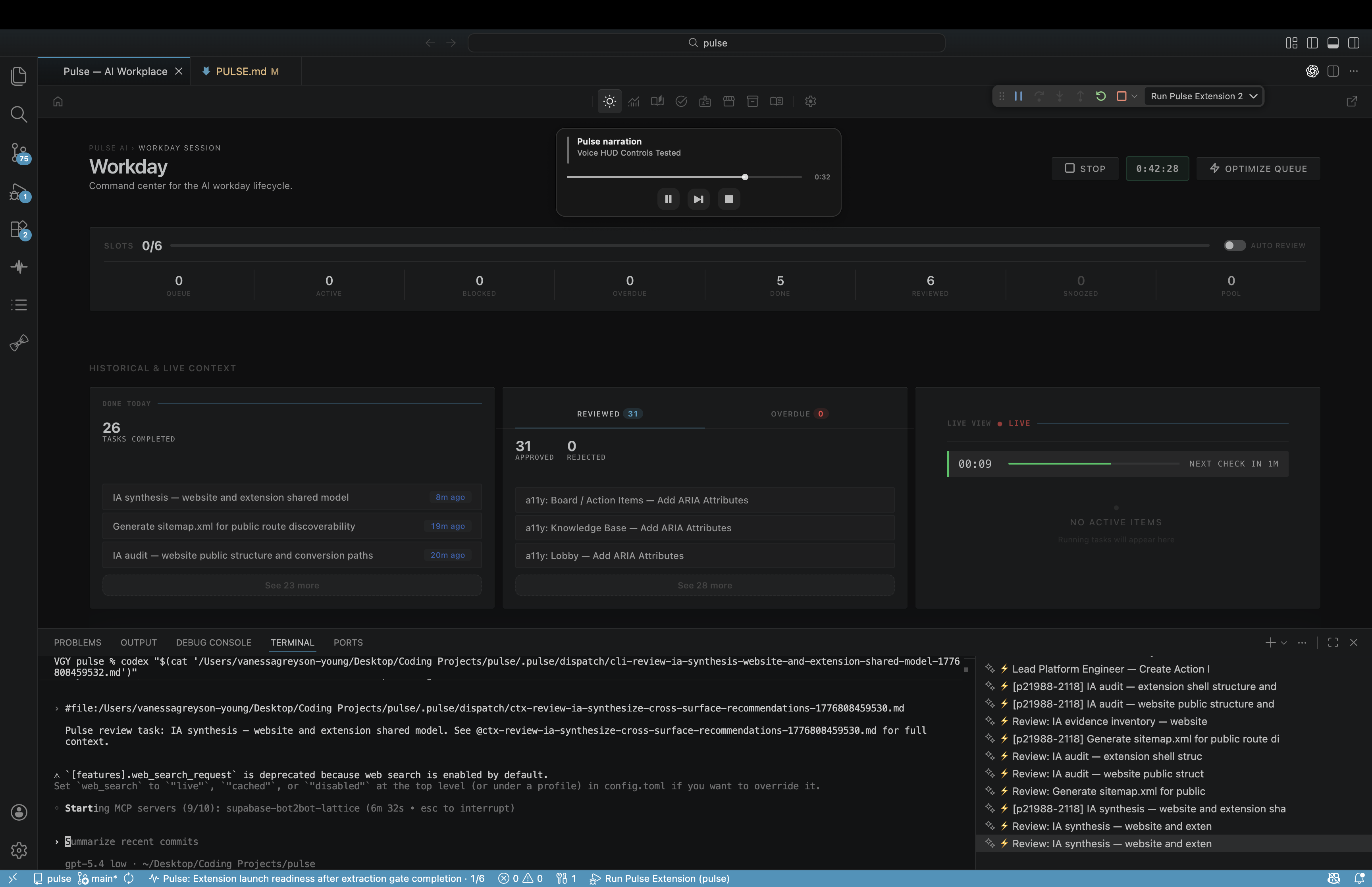

| When should I use it? | Deliverable audio proof | Voice HUD playback screenshot. |

| What does it produce? | Narration WAV bundle | Knowledge Item artifact list and generated WAV guidance. |

| What permissions are needed? | Gemini key and voice preferences | Settings and SecretStorage-backed key setup. |

| How do I recover when it fails? | Generation, quota, and playback recovery | Help Center recovery link for missing audio. |

When to use it

Use Pulse Narrate when Pulse AI needs to speak the result of work back to the operator. It is the right workflow for task closeouts, proposals, audits, presentations, review packages, and conversational responses when voice preferences require narration.

Do not use Pulse Narrate to capture the user's speech. That is Pulse Record. Do not use it to mark webpage issues. That is Pulse Annotate.

Deliverable audio proof

Pulse Narrate exists to make proof listenable. The narration should summarize what changed, what evidence was collected, what remains blocked, and where the review artifacts live. It should sound like a concise briefing, not a mechanical reading of a checklist.

For a completed task, deliverable audio proof means the HTML deck, Markdown summary, narration segments JSON, and narration WAV all live together in the same Knowledge Item artifacts folder.

Deliverable voiceover workflow

Pulse Narrate is built around a skill workflow:

- Write a natural narration script as short spoken segments.

- Save the segments JSON with a human-readable title.

- Run .agents/skills/voice-narration/scripts/generate-narration.py.

- Save the generated WAV inside the related Knowledge Item artifacts folder.

- Play or queue the file through scripts/narration-control.js.

- Keep the narration tied to the same deliverable as the HTML and Markdown artifacts.

For task deliverables, the WAV should summarize the actual evidence, changed files, blockers, and follow-on work. It should not read a bullet list mechanically or claim proof that was not collected.
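The first two workflow steps can be sketched as a small helper that writes the segments file. This is a minimal sketch under stated assumptions, not the schema the generator script requires: the top-level keys follow the title, direction, and segments fields this page names, but treating each segment as a plain string and using a slug as the filename prefix are both assumptions, and `write_segments` is a hypothetical helper name.

```python
import json
from pathlib import Path


def write_segments(artifacts_dir, slug, title, direction, segments):
    """Write <slug>-narration-segments.json and return its path.

    Assumption: each segment is a plain string. The real generator may
    expect a richer per-segment structure.
    """
    # Drop empty segments so the script never contains silent entries.
    cleaned = [s.strip() for s in segments if s.strip()]
    if not cleaned:
        raise ValueError("narration needs at least one non-empty segment")

    artifacts = Path(artifacts_dir)
    artifacts.mkdir(parents=True, exist_ok=True)

    path = artifacts / f"{slug}-narration-segments.json"
    payload = {"title": title, "direction": direction, "segments": cleaned}
    path.write_text(json.dumps(payload, indent=2) + "\n", encoding="utf-8")
    return path
```

Writing the file directly into the Knowledge Item artifacts folder keeps the script next to the WAV it will later explain.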

What it produces

Pulse Narrate produces a narration WAV plus a source segments file. The common output pair is *-narration-segments.json and *-narration.wav. The WAV is the playable artifact; the segments file is the reproducible script that explains what was spoken.

Permissions to expect

Pulse Narrate follows workspace preferences:

- voice.enabled turns voice mode on or off.

- voice.onPresentations controls narration for presentation decks.

- voice.onResponses controls narration for conversational responses.

- voice.onAudits controls narration for audit reports.

- voice.syncKeyAcrossWorkspaces controls whether the Gemini key is provisioned across workspaces.

When voice mode is disabled, the voice sub-toggles are suppressed. When it is enabled for a surface, narration is part of the deliverable contract for that surface.

Pulse Narrate does not ask for microphone permission because it does not record the user. It needs a Gemini TTS key (GEMINI_API_KEY) and follows the workspace voice preferences.

API key and billing setup

Pulse Narrate uses Gemini TTS. Configure the Gemini API key in Pulse AI Settings under Agent Output or Voice. The key is stored through the editor's SecretStorage API, which uses the operating system keychain.

When key sync is enabled, Pulse AI can provision GEMINI_API_KEY into the workspace .env file so local scripts can generate narration. Treat .env as a secret. Do not commit it or include it in screenshots.

Gemini usage may bill against your Google AI account. Pulse AI can enforce its own workflow preferences, but provider pricing, quota, and rate limits are controlled by Google.

Voice mode expectations

Voice mode is a workflow contract, not a decorative media attachment. If voice.enabled and the relevant sub-toggle are true, the response, presentation, or audit should get narration.

The narration should be concise, natural, and specific. It should explain the current state in plain language, including blockers when they exist.
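The toggle rule in this section can be expressed as a short check. This is an illustrative sketch only: it assumes preferences are available as a flat dictionary keyed by the dotted setting names, that a missing key means "off", and that the surface labels used here (`presentation`, `response`, `audit`) are acceptable stand-ins. `narration_required` is a hypothetical name, not a Pulse AI API.

```python
# Maps an assumed surface label to the preference key named on this page.
SURFACE_TOGGLES = {
    "presentation": "voice.onPresentations",
    "response": "voice.onResponses",
    "audit": "voice.onAudits",
}


def narration_required(prefs, surface):
    """Return True when voice mode and the surface's sub-toggle are both on."""
    if surface not in SURFACE_TOGGLES:
        raise ValueError(f"unknown surface: {surface!r}")
    if not prefs.get("voice.enabled", False):
        # Sub-toggles are suppressed while voice mode is disabled.
        return False
    return bool(prefs.get(SURFACE_TOGGLES[surface], False))
```

For example, `narration_required({"voice.enabled": True, "voice.onAudits": True}, "audit")` evaluates to `True`, while the same preferences with `voice.enabled` set to `False` yield `False` for every surface.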

Generated files

For deliverables, narration files belong inside .pulse/knowledge/<slug>/artifacts/ with the HTML deck and Markdown version. Common companion files include:

- A *-narration-segments.json file with the title, direction, and segments.

- A *-narration.wav file generated by Gemini TTS.

- The HTML presentation deck.

- The Markdown summary.

Do not place final narration artifacts in /tmp. Temporary audio fragments can be used during generation, but the final WAV belongs with the Knowledge Item.
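A quick way to confirm that a deliverable's companion files live together is to glob the artifacts folder. The sketch below is an assumption-laden illustration: it assumes the HTML deck and Markdown summary use `.html` and `.md` extensions, and `missing_artifacts` is a hypothetical helper rather than part of the Pulse AI tooling.

```python
from pathlib import Path


def missing_artifacts(artifacts_dir, narration_enabled=True):
    """Return the glob patterns that match no file in the artifacts folder."""
    folder = Path(artifacts_dir)
    # Assumed extensions for the deck and the summary.
    patterns = ["*.html", "*.md"]
    if narration_enabled:
        # Output pair named on this page.
        patterns += ["*-narration-segments.json", "*-narration.wav"]
    return [p for p in patterns if not any(folder.glob(p))]
```

An empty list means every expected file type is present. A result such as `["*-narration.wav"]` corresponds to the incomplete-deliverable case where narration is enabled but no WAV was generated.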

Playback

Use the shared controller:

- node scripts/narration-control.js play --file <path>

- node scripts/narration-control.js replay --file <path>

- node scripts/narration-control.js resume --file <path>

- node scripts/narration-control.js stop

- node scripts/narration-control.js validate

The controller handles queueing, playback state, and the voice HUD on macOS.
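If another script needs to drive the controller, a thin wrapper can build the argument list before calling it. This is a hedged sketch: it uses only the subcommands this page mentions (including `diagnose` from the recovery section), assumes the script is run from the workspace root, and makes no claim about the controller's output or exit codes. `controller_argv` and `run_controller` are hypothetical names.

```python
import subprocess

# Subcommands documented with a --file argument.
FILE_COMMANDS = {"play", "replay", "resume"}
# Subcommands documented without arguments.
BARE_COMMANDS = {"stop", "validate", "diagnose"}


def controller_argv(command, file=None, script="scripts/narration-control.js"):
    """Build the argv list for one narration-control.js invocation."""
    if command in FILE_COMMANDS:
        if not file:
            raise ValueError(f"{command} needs a file path")
        return ["node", script, command, "--file", str(file)]
    if command in BARE_COMMANDS:
        if file is not None:
            raise ValueError(f"{command} does not take a file path")
        return ["node", script, command]
    raise ValueError(f"unknown command: {command!r}")


def run_controller(command, file=None):
    """Run the controller and return its exit code (meaning is not documented here)."""
    return subprocess.run(controller_argv(command, file), check=False).returncode
```

Passing the arguments as a list avoids shell quoting problems when the WAV path contains spaces.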

Recover when it fails

If generation fails with a missing key, configure the Gemini key in Settings and confirm GEMINI_API_KEY is available to the workspace.
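One way to confirm the key is visible to local scripts, without printing it, is to check the process environment and then the workspace .env file. This sketch assumes plain `NAME=value` lines in .env (no `export` prefix or multi-line values) and is not how Pulse AI itself resolves the key. `find_gemini_key_source` is a hypothetical helper.

```python
import os
from pathlib import Path


def find_gemini_key_source(workspace=".", environ=None):
    """Return "environment", ".env", or None. The key value is never returned."""
    env = os.environ if environ is None else environ
    if env.get("GEMINI_API_KEY", "").strip():
        return "environment"

    env_file = Path(workspace) / ".env"
    if env_file.is_file():
        for line in env_file.read_text(encoding="utf-8").splitlines():
            line = line.strip()
            # Skip comments and lines that are not assignments.
            if line.startswith("#") or "=" not in line:
                continue
            name, _, value = line.partition("=")
            if name.strip() == "GEMINI_API_KEY" and value.strip().strip("'\""):
                return ".env"
    return None
```

Reporting only where the key was found keeps the secret out of logs and screenshots.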

If generation fails with a provider error, retry after checking quota, billing, and rate limits in Google AI Studio.

If playback does not start immediately, another narration may already be playing. The controller can queue playback instead of interrupting the current audio.

If the HUD does not appear on macOS, run node scripts/narration-control.js diagnose and check that scripts/voice-hud.sh exists.

If a deliverable has HTML and Markdown but no WAV while voice narration is enabled for presentations, the deliverable is incomplete.

Troubleshooting belongs in Help Center once the product behavior is clear. Start with these articles: "Pulse Narrate support", "Pulse Narrate did not generate audio", and "Pulse AI says my API key is missing or invalid".

Next: Deliverables, API Keys & Providers, and Completion Gate.